AI-Powered Document Q&A App with LangChain & Chroma VectorDB

OpenAI Agent Building + RAG + LangChain · Claude Cursor Development

Imagine having a smart assistant that instantly answers questions from any document: PDF, Excel, Word, or text. 🌊 I built an AI-powered Document Q&A app where users upload files, and the system extracts, chunks, and stores their content in a Chroma vector database. Using a LangChain RAG pipeline with OpenAI embeddings, it retrieves the most relevant passages and answers in natural language. The app can also crawl web pages using Apify scrapers. 🚀 This project highlights my skills in full-stack development, AI integration, vector databases, and building user-friendly, real-world AI apps.


About the Project

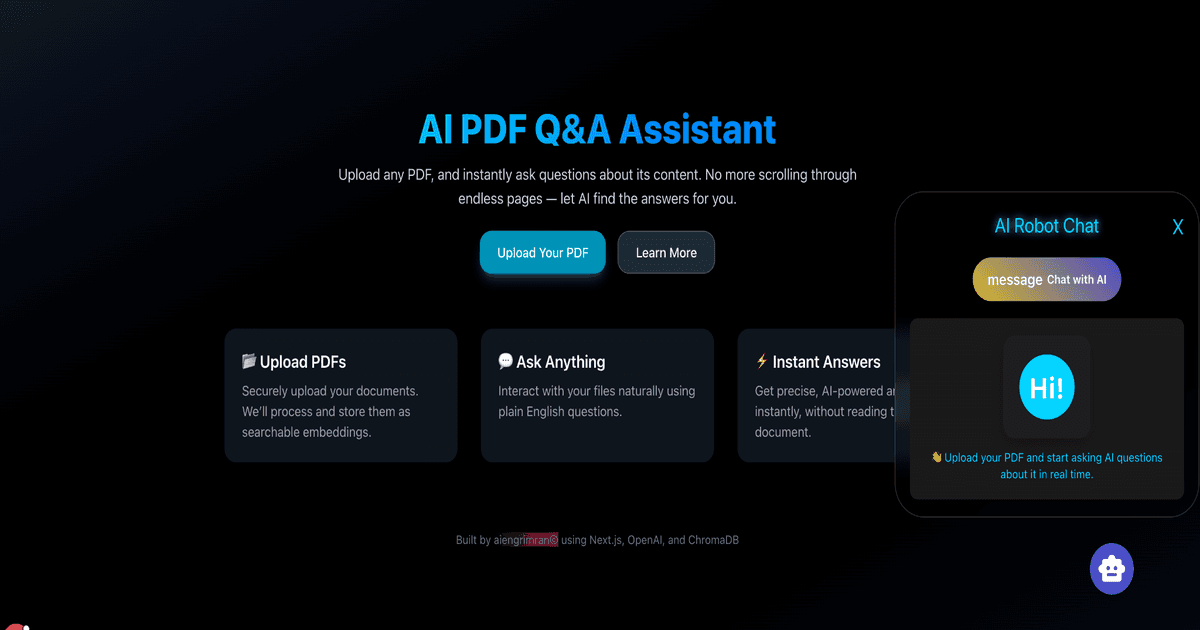

Built an AI-powered Document Q&A application that allows users to upload any document — PDF, Excel, Word, or plain text — and ask natural language questions, receiving accurate answers drawn directly from the document content.

My approach was to implement a full RAG (Retrieval-Augmented Generation) pipeline rather than feeding entire documents to an LLM, which would be expensive and would exceed the model's context window for large files. I split documents into overlapping chunks, so that text cut at one chunk boundary still appears with its surrounding context in the neighboring chunk, generated an embedding for each chunk with OpenAI's embedding model, and stored them in a Chroma vector database. At query time, the question is embedded the same way, the most semantically similar chunks are retrieved, and only those chunks are passed to the LLM as context. This keeps token costs low and grounds each answer in the source text.
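The chunk, embed, and retrieve steps described above can be sketched in dependency-free Python. This is an illustration under stated assumptions, not the project's code: a toy bag-of-words vector (the hypothetical `embed` function) stands in for OpenAI embeddings, a plain in-memory list stands in for the Chroma collection, and the names `chunk_text`, `cosine`, and `retrieve`, along with the default chunk size and overlap, are likewise hypothetical. In LangChain itself, the chunking step is commonly handled by `RecursiveCharacterTextSplitter`, configured through its `chunk_size` and `chunk_overlap` parameters.

```python
import math
import re
from collections import Counter


def chunk_text(text: str, chunk_size: int = 500, overlap: int = 100) -> list[str]:
    """Split text into character windows; consecutive windows share `overlap` chars.

    The defaults are illustrative assumptions, not the project's settings.
    """
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be in [0, chunk_size)")
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # this window already reaches the end of the text
    return chunks


def embed(text: str) -> Counter:
    """Hypothetical stand-in for an embedding model: lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(count * b[word] for word, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question: str, store: list[tuple[str, Counter]], k: int = 3) -> list[str]:
    """Embed the question the same way as the chunks and return the k most similar."""
    q_vec = embed(question)
    ranked = sorted(store, key=lambda item: cosine(q_vec, item[1]), reverse=True)
    return [chunk for chunk, _ in ranked[:k]]


if __name__ == "__main__":
    print(chunk_text("abcdefghij", chunk_size=4, overlap=1))
    # → ['abcd', 'defg', 'ghij']

    passages = [
        "Invoices are due within 30 days of the billing date.",
        "The warranty covers hardware defects for two years.",
        "Support is available by email on weekdays.",
    ]
    # The "vector store" here is just (chunk, vector) pairs held in memory.
    store = [(p, embed(p)) for p in passages]
    print(retrieve("How long does the warranty last?", store, k=1))
    # → ['The warranty covers hardware defects for two years.']
```

Real embedding models capture meaning rather than shared words, so they would also match paraphrases that this word-count stand-in misses; the overall flow of chunk, embed, store, and rank by similarity is the same.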

LangChain was used to orchestrate the pipeline — handling document loaders for different file types, the chunking strategy, embedding generation, vector retrieval, and prompt construction in a clean, modular chain. The application also integrated Apify scrapers to crawl web pages on demand, extending the Q&A capability beyond uploaded files to live web content.
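The loader-routing and prompt-construction stages that LangChain orchestrates here can also be sketched without third-party packages. This is an assumption-based illustration, not the project's chain: `LOADERS`, `load_document`, `PROMPT_TEMPLATE`, and `build_prompt` are hypothetical names, only plain-text files are actually parsed, and the PDF, Word, and Excel entries are placeholders where a real parser would go (LangChain's community package provides loaders such as `PyPDFLoader` and `Docx2txtLoader` for those formats). The prompt wording is also an assumption.

```python
import tempfile
from pathlib import Path
from typing import Callable


def load_text(path: Path) -> str:
    """Read a plain-text file using only the standard library."""
    return path.read_text(encoding="utf-8")


def not_wired(path: Path) -> str:
    """Placeholder for formats that need a third-party parser (PDF, Word, Excel)."""
    raise NotImplementedError(f"No loader wired up for {path.suffix} in this sketch")


# Hypothetical registry mapping file extensions to loader functions.
LOADERS: dict[str, Callable[[Path], str]] = {
    ".txt": load_text,
    ".md": load_text,
    ".pdf": not_wired,
    ".docx": not_wired,
    ".xlsx": not_wired,
}


def load_document(path: str) -> str:
    """Pick a loader based on the file extension and return the document text."""
    p = Path(path)
    loader = LOADERS.get(p.suffix.lower())
    if loader is None:
        raise ValueError(f"Unsupported file type: {p.suffix!r}")
    return loader(p)


# Hypothetical prompt: restrict the model to the retrieved context.
PROMPT_TEMPLATE = """Answer the question using only the context below.
If the answer is not in the context, say you don't know.

Context:
{context}

Question: {question}
Answer:"""


def build_prompt(question: str, chunks: list[str]) -> str:
    """Number the retrieved chunks and insert them into the prompt template."""
    context = "\n".join(f"[{i}] {chunk}" for i, chunk in enumerate(chunks, start=1))
    return PROMPT_TEMPLATE.format(context=context, question=question)


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        notes = Path(tmp) / "notes.txt"
        notes.write_text("Refunds are processed within 5 business days.", encoding="utf-8")
        text = load_document(str(notes))
    print(build_prompt("How fast are refunds?", [text]))
```

Routing on the file extension keeps each format's parsing isolated, so supporting a new format means registering one more loader. Numbering the chunks in the prompt also makes it straightforward to ask the model which chunk an answer came from.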

The result was a full-stack AI application demonstrating practical RAG architecture — the same pattern used in enterprise knowledge management tools — built with production-ready components rather than toy implementations.