Hand Sign Detection Using YOLOv8 & FastAPI

This interactive AI project detects how many fingers a user is holding up and visualizes the result as illuminated bulbs on screen. A YOLOv8 model trained to recognize fingertip positions lets the system count between one and five raised fingers in real time. The FastAPI backend, hosted on Hugging Face Spaces, processes uploaded or captured hand images and returns both the detected count and an annotated image. On the React.js frontend, up to five bulbs light up dynamically, one for each detected finger, with smooth transitions and light/dark theme support. The result is an engaging, visually intuitive demo of computer vision capabilities.

About the Project

I built a real-time hand sign detection application that counts raised fingers from camera input and visualizes the result as illuminated bulbs on screen. It combines computer vision, a FastAPI backend, and an interactive React.js frontend.

I started by collecting hand images and annotating fingertip positions in Label Studio to build a custom dataset. I then trained a YOLOv8n model on this dataset via Roboflow. I chose the nano variant for its balance of speed and accuracy: it is fast enough for real-time inference, and because inference runs on the backend, no GPU hardware is needed on the end user's device.
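To make the annotation step concrete, here is a minimal sketch of how one rectangle annotation could be turned into a YOLO label line. It assumes the Label Studio convention of storing a rectangle as a top-left corner plus width and height, all in percent of the image size, and the YOLO convention of a normalized box center plus width and height. The function name `label_studio_to_yolo` and the single class id `0` (for a "fingertip" class) are illustrative assumptions. The write-up does not say how the dataset was actually exported, and both Label Studio and Roboflow can handle this conversion for you.

```python
def label_studio_to_yolo(x_pct, y_pct, width_pct, height_pct, class_id=0):
    """Convert one rectangle to a YOLO label line.

    Input: top-left corner and size, in percent of the image (0-100).
    Output: "class x_center y_center width height", normalized to 0-1.
    class_id=0 is an assumed id for a single fingertip class.
    """
    x_center = (x_pct + width_pct / 2) / 100
    y_center = (y_pct + height_pct / 2) / 100
    width = width_pct / 100
    height = height_pct / 100
    return f"{class_id} {x_center:.6f} {y_center:.6f} {width:.6f} {height:.6f}"


# A box whose top-left corner sits at 40% / 20% of the image,
# covering 10% of the width and 10% of the height.
print(label_studio_to_yolo(40, 20, 10, 10))
```

Each image gets one text file with one such line per annotated fingertip.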

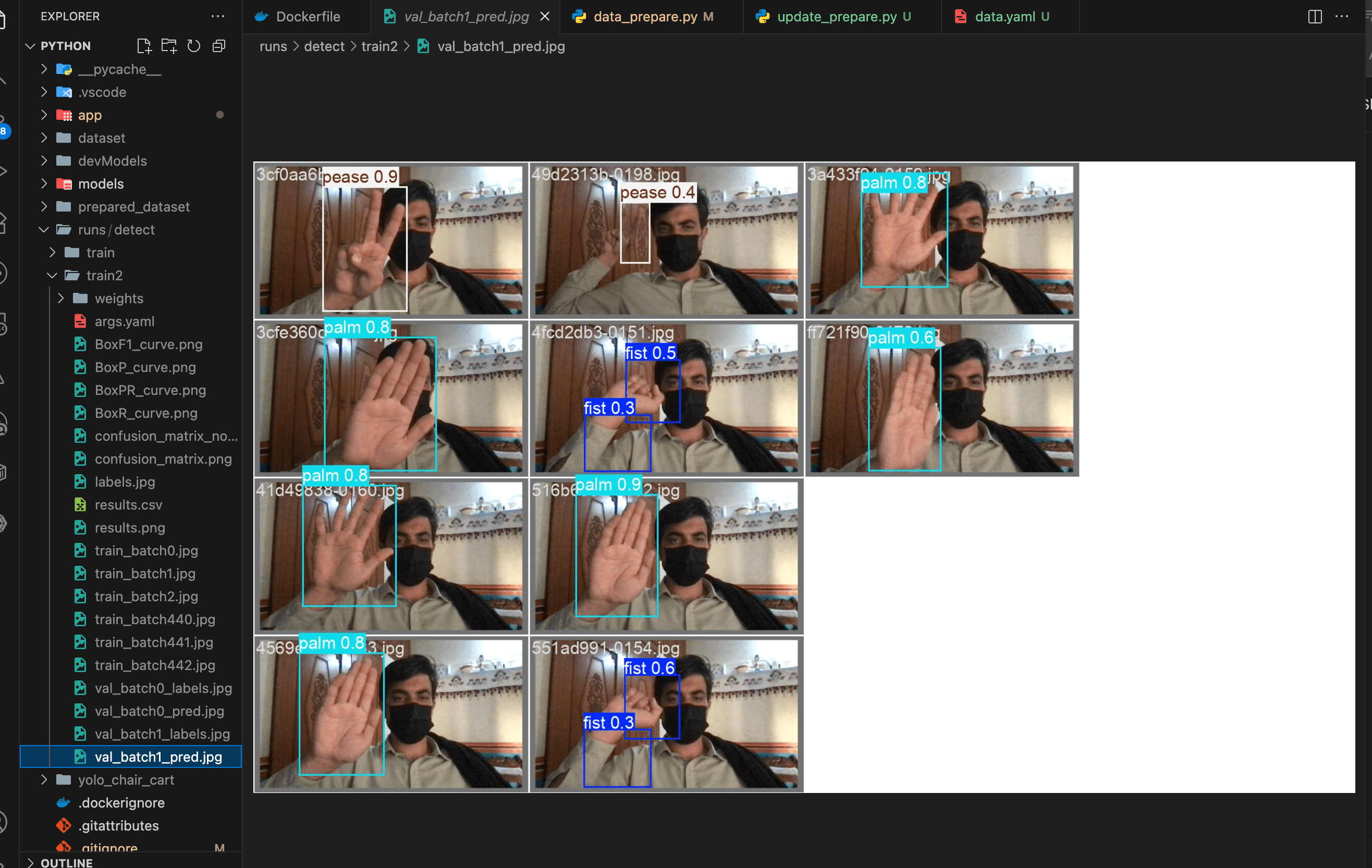

The trained model was deployed as a FastAPI endpoint hosted on Hugging Face Spaces, which provided free hosting for the inference service. The API accepts uploaded or captured hand images, runs YOLOv8 inference, and returns both the detected finger count and an annotated image showing the detection bounding boxes.
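The step between raw detections and the API response can be sketched as follows. This is not the project's actual handler, only an illustration of the counting logic that would sit inside a FastAPI route after model inference. The `Detection` type, the `build_response` function, the response field names, and the 0.5 confidence threshold are all assumptions. The sketch leaves out drawing the annotated image, which would be rendered from the same boxes.

```python
from dataclasses import dataclass

MAX_FINGERS = 5        # the UI shows at most five bulbs
CONF_THRESHOLD = 0.5   # assumed cutoff; the write-up does not state one


@dataclass
class Detection:
    """One fingertip detection (hypothetical container type)."""
    label: str
    confidence: float
    box: tuple  # (x1, y1, x2, y2) in pixels


def build_response(detections, threshold=CONF_THRESHOLD):
    """Turn raw detections into a finger count plus the boxes behind it."""
    # Drop low-confidence detections, then keep the strongest ones first.
    kept = [d for d in detections if d.confidence >= threshold]
    kept.sort(key=lambda d: d.confidence, reverse=True)
    kept = kept[:MAX_FINGERS]
    return {
        "count": len(kept),
        "boxes": [list(d.box) for d in kept],
    }


detections = [
    Detection("fingertip", 0.9, (10, 10, 20, 20)),
    Detection("fingertip", 0.3, (30, 30, 40, 40)),  # below threshold
    Detection("fingertip", 0.7, (50, 50, 60, 60)),
]
print(build_response(detections))
```

Capping the count at five keeps a stray extra detection from producing a value the frontend cannot display.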

The React.js frontend displays the result as a row of five bulbs that light up dynamically based on the detected count — one bulb per finger — with smooth CSS transitions and full light/dark theme support. This visual metaphor made the AI output immediately intuitive without technical explanation, demonstrating how to make computer vision results accessible to non-technical users.
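The mapping from detected count to bulb states is simple enough to sketch in a few lines. The real frontend is written in React.js. Python is used here only to match the backend, and the function name `bulb_states` is hypothetical.

```python
def bulb_states(count, total=5):
    """Return one on/off flag per bulb: the first `count` bulbs are lit."""
    # Clamp so that an unexpected count never lights more bulbs than exist.
    lit = max(0, min(int(count), total))
    return [i < lit for i in range(total)]


print(bulb_states(3))
```

In the React component, each flag would drive a CSS class on one bulb, so the transition styling handles the animation.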